Abstract

Multi-object tracking and segmentation (MOTS) is important for understanding dynamic scenes in video data. Existing methods perform well on multi-object detection and segmentation for independent video frames, but tracking objects over time remains a challenge. Current MOTS methods formulate tracking locally, i.e., frame-by-frame, which leads to sub-optimal results. Classical global tracking methods operate directly on object detections, which can lead to combinatorial growth in the detection space. To address these issues, we formulate a global method for MOTS over the space of assignments rather than detections. In step 1 of our two-step method, we find the top-k assignments of objects detected and segmented between any two consecutive frames. We then develop a structured prediction formulation to score assignment sequences across any number of consecutive frames, and use dynamic programming to find the global optimizer of this formulation in polynomial time. In step 2, we connect objects which reappear after having been out of view for some time, which we formulate as an assignment problem. On the challenging KITTI-MOTS and MOTSChallenge datasets, this method achieves state-of-the-art results among methods which do not use depth information.
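The dynamic-programming part of step 1 can be illustrated with a minimal Viterbi-style sketch. This is not the implementation behind the reported results. It assumes that each pair of consecutive frames already comes with k candidate assignments, that `unary[t][i]` scores candidate `i` for frame pair `t`, and that `pairwise[t][i][j]` scores how compatible candidate `i` at pair `t` is with candidate `j` at pair `t + 1`. The function name, the score layout, and the additive form of the objective are all assumptions made for illustration.

```python
from typing import List, Sequence, Tuple


def best_assignment_sequence(
    unary: Sequence[Sequence[float]],
    pairwise: Sequence[Sequence[Sequence[float]]],
) -> Tuple[float, List[int]]:
    """Return the highest-scoring sequence of candidate assignments.

    unary[t][i]       -- hypothetical score of candidate i for frame pair t
    pairwise[t][i][j] -- hypothetical compatibility of candidate i at pair t
                         with candidate j at pair t + 1
    Runs in O(T * k^2) time for T frame pairs with k candidates each.
    """
    if not unary:
        return 0.0, []

    # best[i]: score of the best sequence ending in candidate i at the current pair
    best = list(unary[0])
    backpointers: List[List[int]] = []

    for t in range(1, len(unary)):
        new_best: List[float] = []
        pointers: List[int] = []
        for j, u in enumerate(unary[t]):
            # Pick the predecessor candidate that maximizes the running score.
            i_star = max(
                range(len(best)),
                key=lambda i: best[i] + pairwise[t - 1][i][j],
            )
            new_best.append(best[i_star] + pairwise[t - 1][i_star][j] + u)
            pointers.append(i_star)
        best = new_best
        backpointers.append(pointers)

    # Trace the optimal sequence back from the best final candidate.
    j = max(range(len(best)), key=best.__getitem__)
    score = best[j]
    path = [j]
    for pointers in reversed(backpointers):
        j = pointers[j]
        path.append(j)
    path.reverse()
    return score, path


# Toy example: three frame pairs with two candidates each.
unary = [[1.0, 0.9], [0.5, 0.6], [0.2, 0.1]]
pairwise = [
    [[0.0, 0.0], [0.0, 1.0]],
    [[0.5, 0.0], [0.0, 0.0]],
]
score, path = best_assignment_sequence(unary, pairwise)
```

In this toy example, a frame-by-frame choice would pick candidate 0 for the first frame pair because its unary score is higher, whereas the global optimum starts with candidate 1, since its compatibility with the next pair outweighs the small local difference.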

Qualitative Results

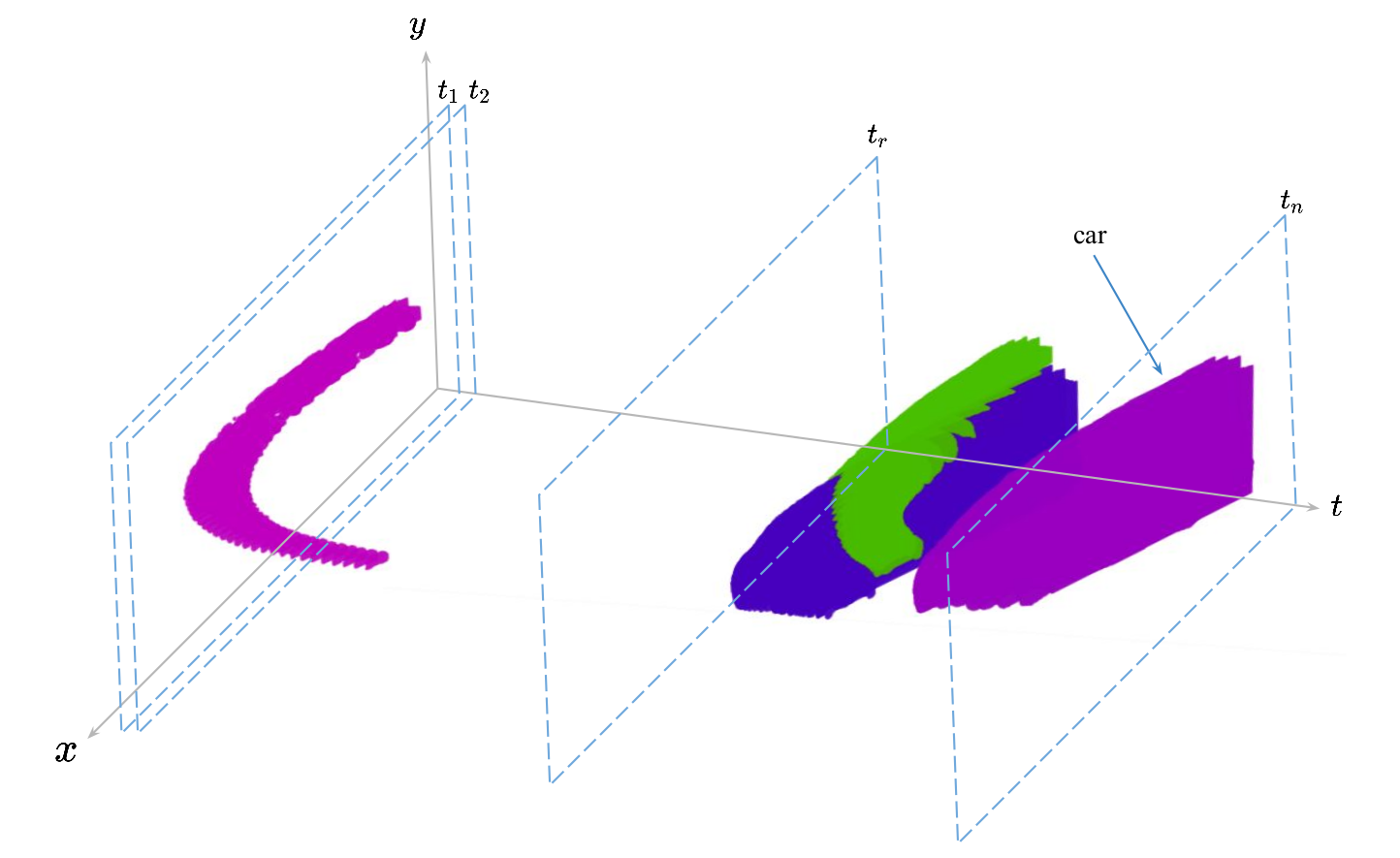

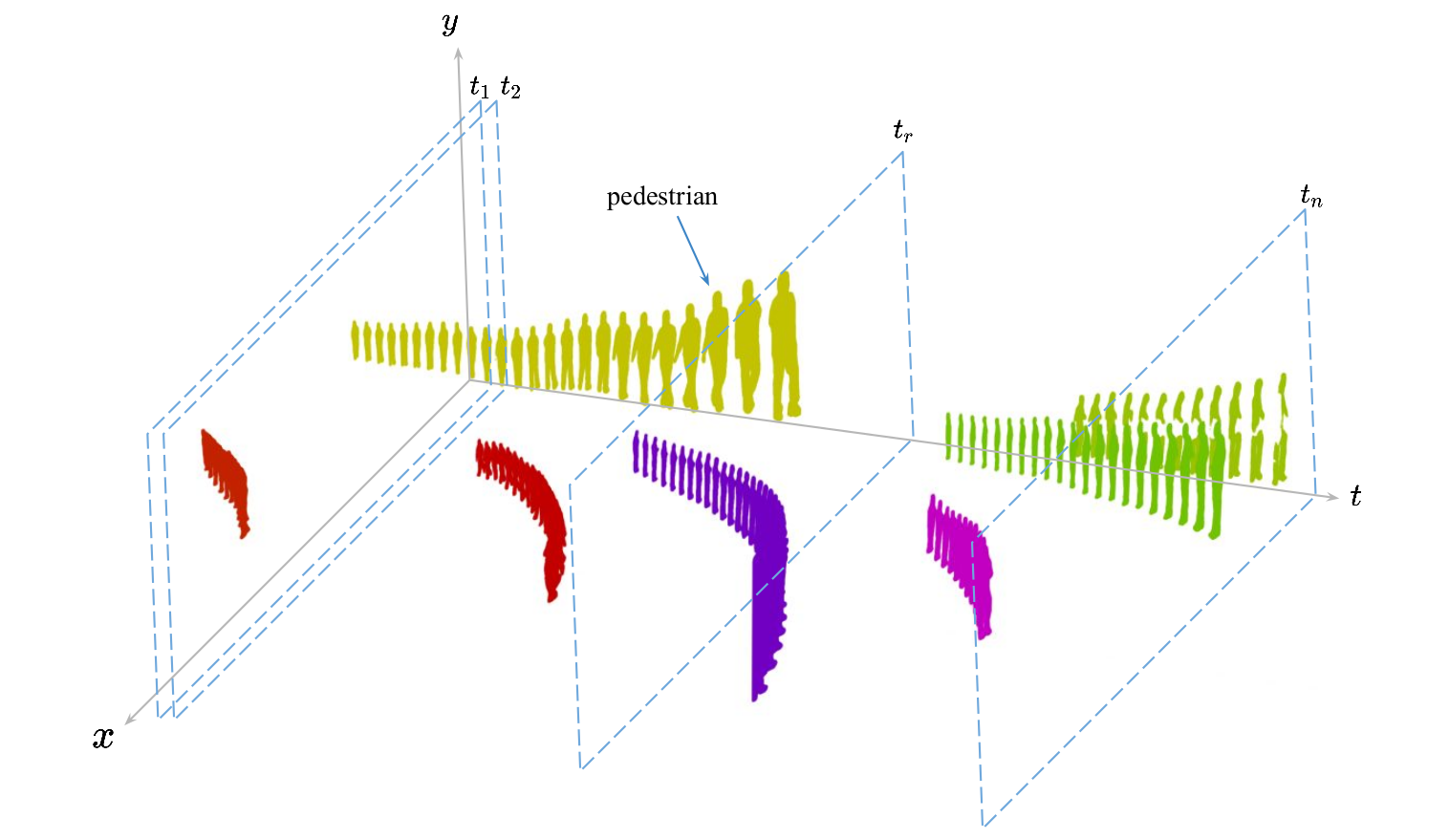

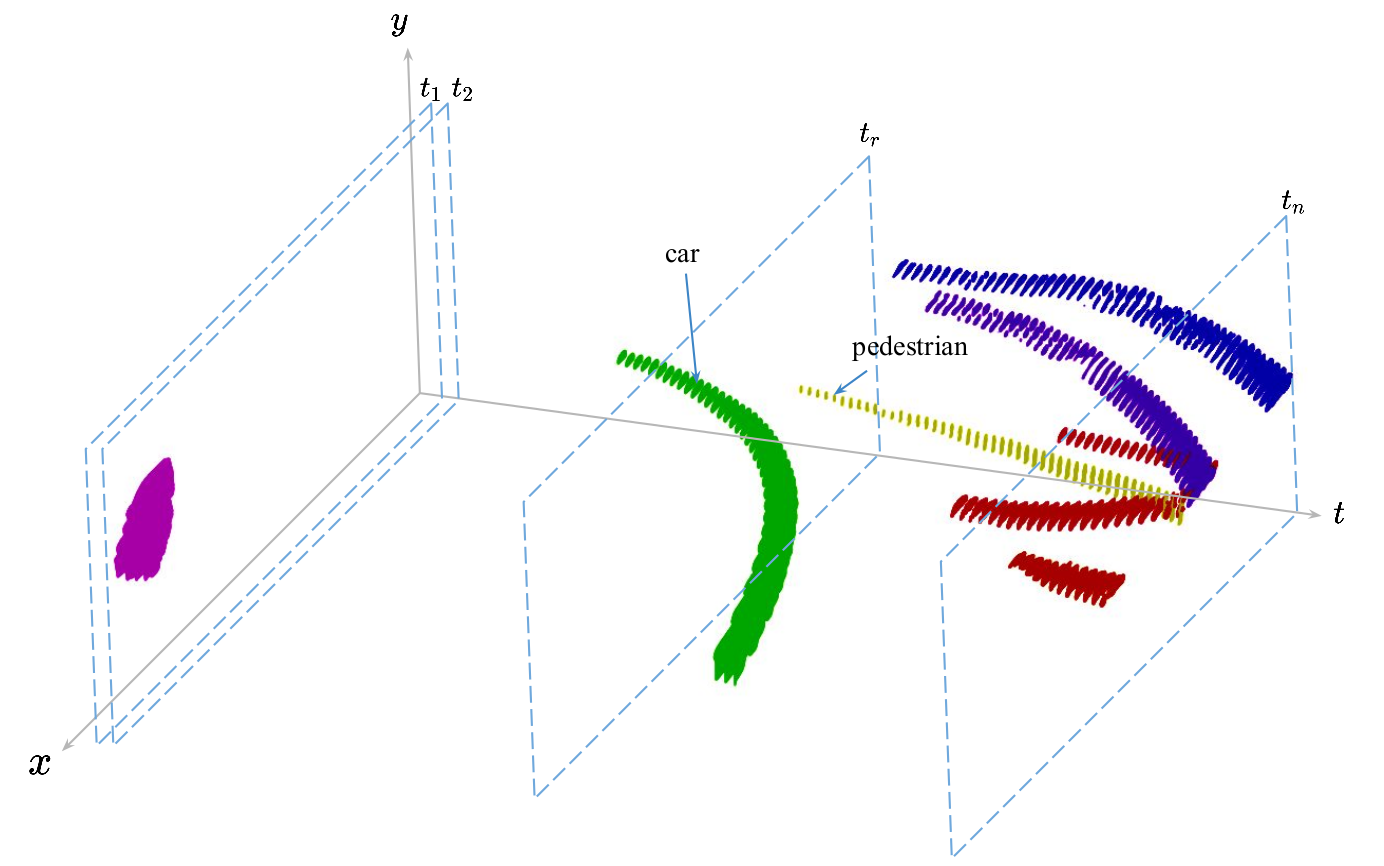

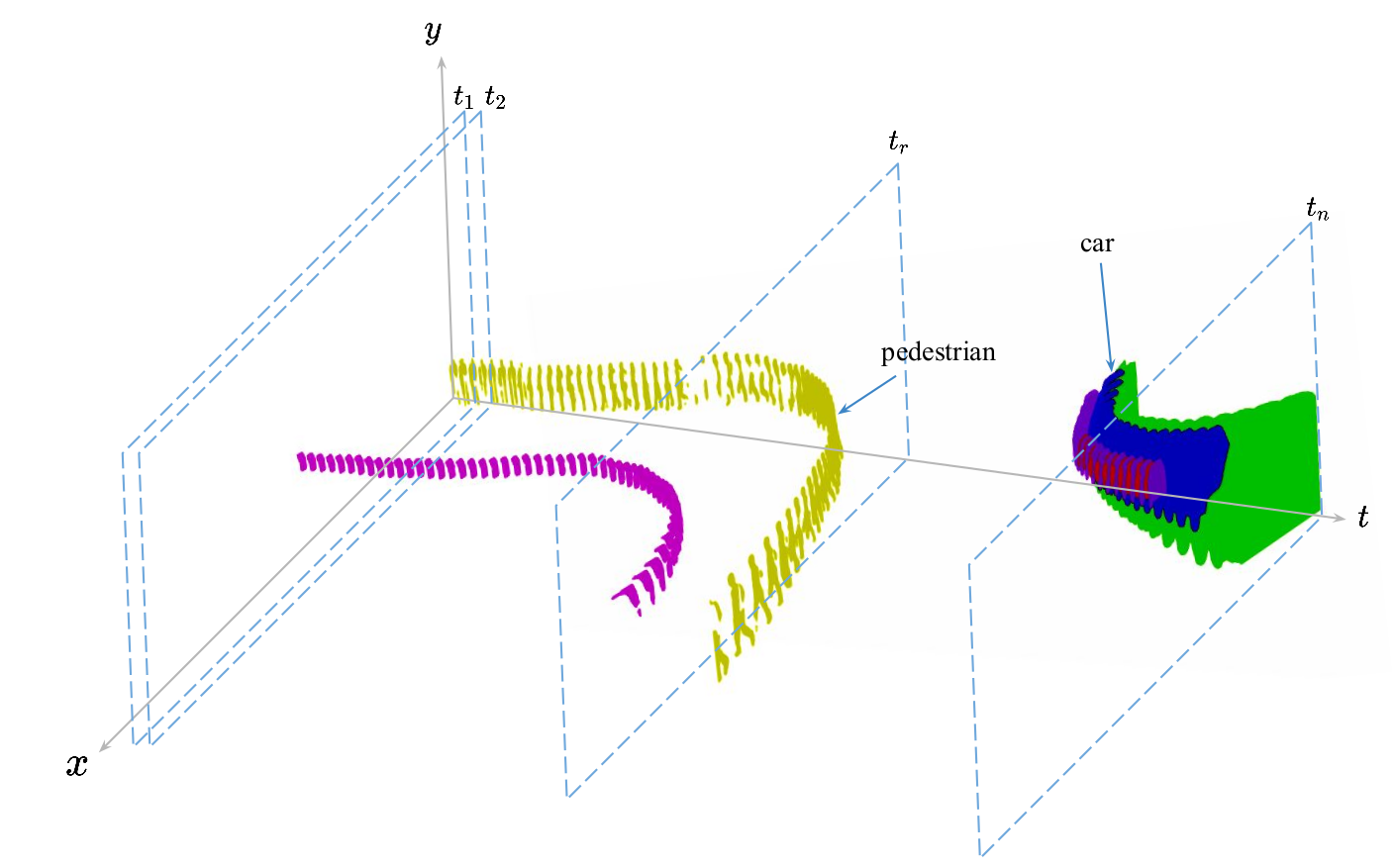

Visualization of Tracks

Acknowledgement

This work is supported in part by the Agriculture and Food Research Initiative (AFRI) grant no. 2020-67021-32799/project accession no. 1024178 from the USDA National Institute of Food and Agriculture: NSF/USDA National AI Institute: AIFARMS. We also thank the Illinois Center for Digital Agriculture for seed funding for this project.